Nvidia’s DreamDojo Trains Robots with 44,711 Hours of Human Video, Redefining AI Simulation

- Dr. Jacqueline Evans

- 2 days ago

- 6 min read

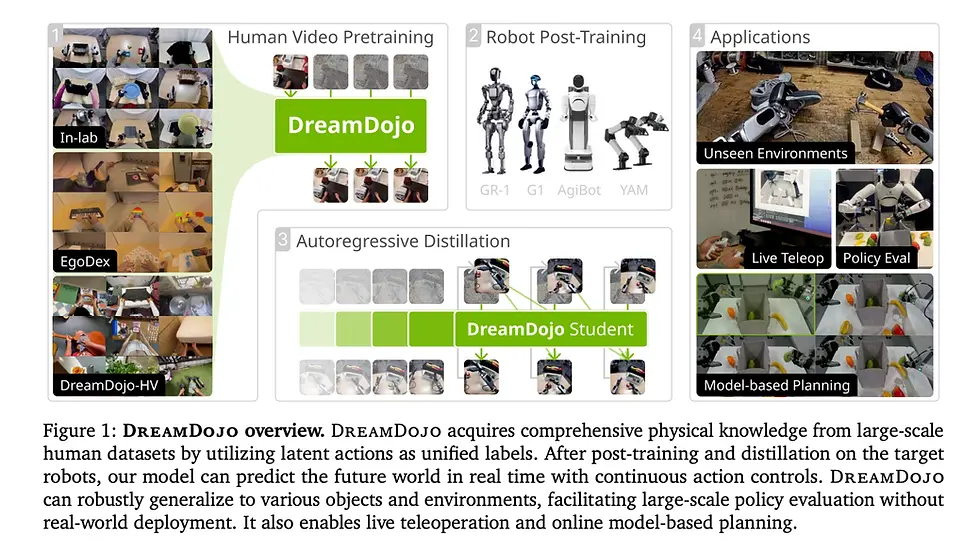

In the rapidly evolving landscape of artificial intelligence, the integration of AI with robotics has emerged as a transformative frontier. Nvidia, a long-established leader in high-performance computing and AI acceleration, has recently unveiled DreamDojo, an open-source, interactive world model that promises to redefine how robots learn and interact with the physical world. By leveraging tens of thousands of hours of human video, DreamDojo enables robots to acquire generalizable knowledge about physics and object manipulation without direct interaction, setting a new benchmark for robotics research and enterprise applications.

Understanding the DreamDojo Model

DreamDojo represents a paradigm shift from traditional robotics simulators, which rely heavily on hand-engineered physics engines, 3D modeling, and painstaking calibration of robot-specific datasets. Instead, DreamDojo uses “Simulation 2.0”, a system capable of predicting future states of the environment directly in pixels, effectively bypassing conventional engines or meshes.

At its core, the system leverages a two-phase training methodology:

Pre-training on Human Video – The model is trained on 44,711 hours of first-person egocentric human video captured across 9,869 unique scenes, covering over 6,015 tasks and 43,237 objects. This dataset, known as DreamDojo-HV, provides robots with foundational knowledge of general physical interactions, enabling them to predict how objects behave and how humans manipulate them in real-world contexts.

Post-training on Robot-Specific Actions – After acquiring general physical knowledge, DreamDojo undergoes post-training tailored to specific robotic hardware. This step aligns latent human-derived actions with a robot’s motor capabilities, effectively bridging the gap between observing humans and executing actions autonomously.

Jim Fan, Director of AI and Distinguished Scientist at Nvidia, emphasizes that DreamDojo separates “how the world looks and behaves” from “how this particular robot actuates,” allowing the model to generalize across robot types without requiring massive robot-specific datasets.

Latent Action Representation: From Human Motion to Robot Control

A major technical challenge in using human video data is the absence of direct robot motor commands. DreamDojo addresses this through latent actions, a unified representation derived from consecutive video frames via a spatiotemporal Transformer Variational Autoencoder (VAE).

Encoding Motion: The VAE takes two consecutive frames and generates a 32-dimensional latent vector, representing the most critical physical changes between frames.

Hardware Agnostic: By disentangling action from visual context, the system enables robots of different architectures to interpret the same latent actions, allowing knowledge learned from humans to transfer effectively to multiple robotic platforms.

Scalability: This representation allows pre-training on human video at massive scale, eliminating the bottleneck of costly robot-specific data collection.

This approach leverages the natural proficiency of humans in manipulating complex objects, such as pouring liquids, folding clothes, or stacking irregular items, and converts these insights into actionable guidance for robots.

Architectural Innovations for High-Fidelity Robotics Simulation

DreamDojo’s architecture is built on the Cosmos-Predict2.5 latent video diffusion model with temporal compression via the WAN2.2 tokenizer, optimized for both visual fidelity and accurate physics modeling. Key architectural improvements include:

Relative Actions: The model represents actions as deltas rather than absolute poses, improving generalization across varied trajectories.

Chunked Action Injection: Four consecutive latent actions are injected per frame to align with temporal compression, preserving causality and reducing prediction errors.

Temporal Consistency Loss: A loss function ensures predicted frame velocities match ground-truth transitions, minimizing visual artifacts and maintaining object stability.

Additionally, a Self-Forcing Distillation Pipeline accelerates model inference, reducing denoising steps from 35 to 4, achieving real-time performance at 10.81 frames per second for continuous 60-second rollouts. This enables interactive applications such as live teleoperation, model-based planning, and policy evaluation without the need for physical robot trials.

Downstream Applications and Real-World Impact

DreamDojo’s high-fidelity simulations and real-time capabilities unlock multiple critical applications for robotics engineering:

Reliable Policy Evaluation: Robots can be benchmarked safely in simulated environments, reducing the risk and cost of trial-and-error experiments. The system demonstrates a Pearson correlation of 0.995 with real-world outcomes and a Mean Maximum Rank Violation (MMRV) of 0.003, underscoring the simulator’s accuracy.

Model-Based Planning: Robots can simulate multiple action sequences, enabling them to select optimal strategies. In a fruit-packing scenario, this approach improved real-world success rates by 17%, providing a 2x increase in efficiency compared to random action sampling.

Live Teleoperation: Developers can interact with virtual robots in real time using VR controllers, collecting safe and high-quality data for model refinement. Demonstrations have included robots such as GR-1, G1, AgiBot, and YAM humanoid robots, performing realistic object manipulations across diverse settings.

Enterprise Simulation: Organizations evaluating humanoid robots can simulate factory-floor operations extensively before committing to costly physical deployments. By training on 44,000+ hours of diverse human video, DreamDojo equips robots with generalizable “common sense” about physics and object interaction, addressing variability in real-world industrial environments.

Implications for the Robotics Industry

The release of DreamDojo comes at a pivotal moment in the robotics sector, with AI-driven automation becoming increasingly central to enterprise and manufacturing strategies. CEO Jensen Huang has highlighted robotics as a “once-in-a-generation” opportunity, with global AI infrastructure investments expected to reach $660 billion in 2026 alone.

Robotics startups saw record investment in 2025, totaling $26.5 billion, while major industrial players such as Siemens, Mercedes-Benz, and Volvo have announced strategic robotics initiatives. Tesla has projected that 80% of its future value will stem from Optimus humanoid robots. DreamDojo, by enabling rapid simulation and safer testing, lowers barriers for enterprise adoption of advanced robotic systems.

Expert observations suggest that the ability to pre-train robots using human-centric video data could accelerate industrial automation timelines, reduce operational costs, and improve safety outcomes. As Michael Nuñez from VentureBeat notes, DreamDojo’s generalizable world model allows robots to adapt to previously unseen objects and environments, bridging the gap between laboratory demonstrations and complex real-world deployments.

Comparison to Traditional Robot Training

Feature | Traditional Simulation | DreamDojo |

Data Requirement | Robot-specific datasets, manually collected | Human video dataset (44,711 hours), robot-agnostic |

Physics Modeling | Engine-based, manually encoded | Learned latent physical dynamics from video |

Real-Time Performance | Limited by computation, engine constraints | 10.81 FPS for 60-second rollouts |

Generalization | Often brittle, object/task-specific | Adaptable across robot hardware and environments |

Policy Evaluation | Risky, requires physical trials | Accurate simulated evaluation with high correlation to real-world outcomes |

The model’s reliance on human video as a proxy for robot experience not only reduces costs but also expands the scale and diversity of tasks robots can learn, overcoming one of the most significant bottlenecks in robotics AI.

Ethical Considerations and Open-Source Accessibility

Nvidia has committed to releasing all weights, code, post-training datasets, and evaluation benchmarks openly. This transparency allows researchers and organizations to post-train DreamDojo on custom robot data, accelerating innovation while promoting reproducibility.

From an ethical standpoint, training on human video data raises considerations regarding privacy and consent. DreamDojo’s design, however, abstracts video content into latent action representations, ensuring that personal identifiers are removed and that the model learns generalizable motion patterns rather than individual-specific behaviors.

Open-source accessibility aligns with broader trends in AI research, where collaborative development and shared datasets can accelerate advancements across academia, industry, and open research communities.

Future Prospects and Strategic Implications

DreamDojo illustrates Nvidia’s broader strategic pivot from gaming-focused computing to robotics and AI infrastructure. By combining high-performance GPU computing, generative models, and large-scale data, Nvidia is positioning itself as a key enabler of the next generation of intelligent robots.

Potential future developments include:

Scaling DreamDojo to larger video datasets to enhance physics intuition and task diversity.

Integrating multi-modal sensory inputs, such as audio and tactile feedback, to further improve realism and action fidelity.

Deploying DreamDojo in commercial robotics applications, from manufacturing and logistics to service robots in healthcare and retail.

Kyle Barr from Gizmodo observed that Nvidia now views traditional gaming as a “non-core” segment, emphasizing the company’s investment in AI robotics as the next frontier where chip performance and AI expertise converge.

Conclusion

Nvidia’s DreamDojo represents a transformative milestone in robotics, leveraging 44,711 hours of human video to teach robots generalizable, physics-informed behaviors. By combining latent action representations, high-fidelity simulation, and real-time interactive capabilities, DreamDojo addresses critical bottlenecks in robot training and enterprise deployment.

The system exemplifies the future of AI-driven robotics — one where robots can learn indirectly from human expertise, adapt to diverse environments, and accelerate the path from research prototypes to practical applications.

For continued insights into AI-driven robotics, automation, and industry-leading innovations, follow the expert team at 1950.ai. Their research and practical implementations complement developments like DreamDojo, providing a holistic view of AI’s transformative potential.

Comments