Intel TSNC: How AI Neural Compression Could Cut Game Texture Sizes by 90% and Transform Modern Gaming

- Chun Zhang

- Apr 7

The gaming and graphics industry is entering a new era in which artificial intelligence is used not only for upscaling, rendering, and image generation, but also for fundamental data optimization. One of the most significant developments in this space is Intel’s Texture Set Neural Compression (TSNC), a neural-network-based technology designed to drastically reduce game texture sizes while keeping visual quality and performance close to current levels.

Modern video games rely heavily on high-resolution textures to create realistic environments, characters, and lighting effects. However, as visual fidelity increases, storage requirements, memory consumption, and bandwidth demands continue to grow. Developers face constant pressure to balance performance, quality, and hardware limitations. TSNC presents a new approach to this challenge by introducing AI-driven compression that treats entire texture sets as a unified optimization problem rather than compressing individual textures independently.

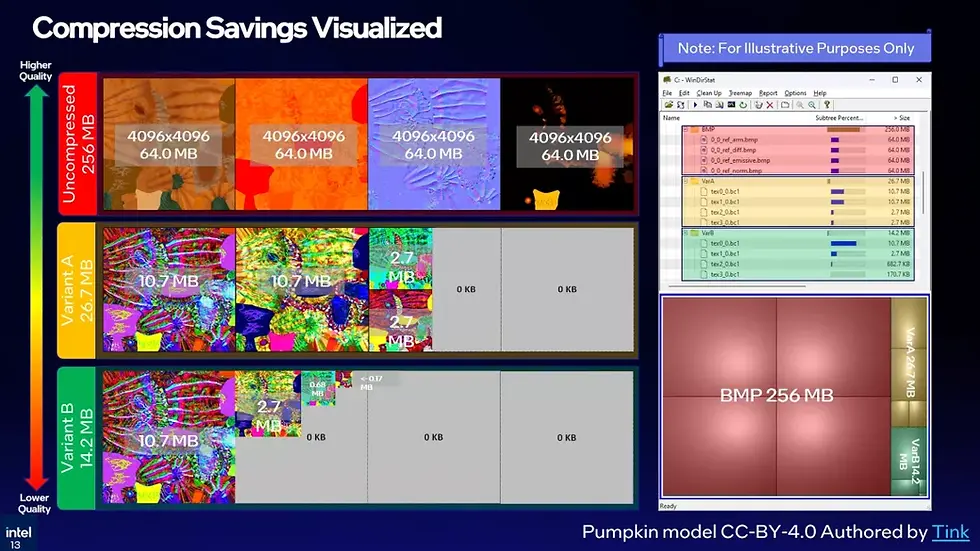

Intel reports compression ratios of up to 18x for TSNC, with a perceptual quality loss of roughly 5 to 7% depending on the variant, along with lower VRAM usage, faster load times, and better runtime performance. This development signals a potential shift in how game assets may be stored, processed, and rendered in future gaming ecosystems.

The Growing Challenge of Texture Data in Modern Gaming

Game textures are one of the largest components of modern game data. With the rise of 4K and 8K gaming, photorealistic environments, and real-time ray tracing, texture sizes have grown sharply from one hardware generation to the next.

Key challenges developers face include:

Increasing game installation sizes, often exceeding 100 GB

Limited GPU VRAM availability in mid-range systems

High memory bandwidth requirements

Longer loading times and asset streaming delays

Storage and distribution constraints for digital platforms

Traditional block compression formats, meaning the BCn family that begins with BC1, have been the industry standard for years. These formats encode each fixed 4x4 block of texels into a small, fixed number of bytes, which keeps decoding cheap for GPU hardware while preserving most of the visual detail. However, they operate on individual textures, so they cannot exploit redundancy across the textures in a set.
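To make the block-based idea concrete, here is a minimal sketch of decoding a single BC1 block in Python. It follows the commonly documented BC1 layout (two RGB565 endpoint colors followed by sixteen 2-bit palette indices, 8 bytes per 4x4 block). The function names are illustrative, and hardware decoders may round the interpolated colors slightly differently.

```python
import struct

def rgb565_to_rgb888(c):
    """Expand a packed 5:6:5 color to 8 bits per channel by bit replication."""
    r, g, b = (c >> 11) & 0x1F, (c >> 5) & 0x3F, c & 0x1F
    return ((r << 3) | (r >> 2), (g << 2) | (g >> 4), (b << 3) | (b >> 2))

def decode_bc1_block(block):
    """Decode one 8-byte BC1 block into 16 RGBA tuples in row-major order."""
    if len(block) != 8:
        raise ValueError("a BC1 block is exactly 8 bytes")
    c0_raw, c1_raw, indices = struct.unpack("<HHI", block)
    c0, c1 = rgb565_to_rgb888(c0_raw), rgb565_to_rgb888(c1_raw)
    if c0_raw > c1_raw:
        # Four-color mode: the two endpoints plus two interpolated colors.
        c2 = tuple((2 * a + b) // 3 for a, b in zip(c0, c1))
        c3 = tuple((a + 2 * b) // 3 for a, b in zip(c0, c1))
        palette = [c0 + (255,), c1 + (255,), c2 + (255,), c3 + (255,)]
    else:
        # Three-color mode: one midpoint color plus transparent black.
        c2 = tuple((a + b) // 2 for a, b in zip(c0, c1))
        palette = [c0 + (255,), c1 + (255,), c2 + (255,), (0, 0, 0, 0)]
    # Each texel uses 2 bits of the 32-bit index word, lowest bits first.
    return [palette[(indices >> (2 * i)) & 0x3] for i in range(16)]

# White and black endpoints; every row cycles through palette entries 0..3.
block = struct.pack("<HHI", 0xFFFF, 0x0000, 0xE4E4E4E4)
texels = decode_bc1_block(block)
print(texels[:4])
```

Because every block is decoded independently from 8 bytes, the format is fast and random-access friendly, but nothing in it can share information between separate textures.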

TSNC introduces a new paradigm by applying neural-network-based compression across groups of textures, enabling significantly higher compression ratios without fundamentally changing existing workflows.

Understanding Texture Set Neural Compression (TSNC)

Texture Set Neural Compression is designed as a neural layer on top of existing block compression schemes. Instead of replacing traditional compression formats, TSNC enhances them by integrating machine learning techniques into the pipeline.

The core concept is straightforward:

A neural network is trained on related textures

The textures are encoded into a shared latent space

Compressed data is stored using BC1 pyramid levels

A neural decoder reconstructs textures during runtime

This approach allows developers to compress entire texture sets more efficiently while maintaining compatibility with existing rendering pipelines.

The system uses a three-layer neural network, specifically a multilayer perceptron (MLP), to reconstruct texture data from compressed representations. The result is smaller texture files that load faster and consume less memory while maintaining acceptable visual quality.
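As a rough illustration of what a three-layer MLP decoder does per texel, the following sketch runs a forward pass in plain Python. Everything specific here is an assumption for illustration, not Intel's published design: the layer widths, the ReLU and sigmoid activations, the number of output channels, and the random weights that stand in for trained ones.

```python
import math
import random

def make_layer(rng, n_in, n_out):
    """Random weights and zero biases; a trained decoder would load learned values."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

def dense(layer, x):
    """Apply one fully connected layer: weights times x, plus biases."""
    weights, biases = layer
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]

def relu(x):
    return [max(0.0, v) for v in x]

def sigmoid(x):
    return [1.0 / (1.0 + math.exp(-v)) for v in x]

def decode_texel(layers, latent):
    """Three dense layers: two ReLU hidden layers, then a sigmoid output in [0, 1]."""
    h = relu(dense(layers[0], latent))
    h = relu(dense(layers[1], h))
    return sigmoid(dense(layers[2], h))

LATENT_DIM = 12    # hypothetical size of the latent feature vector
HIDDEN_DIM = 16    # hypothetical hidden layer width
OUT_CHANNELS = 9   # hypothetical: e.g. albedo RGB + normal XYZ + three ARM channels

rng = random.Random(0)
layers = [
    make_layer(rng, LATENT_DIM, HIDDEN_DIM),
    make_layer(rng, HIDDEN_DIM, HIDDEN_DIM),
    make_layer(rng, HIDDEN_DIM, OUT_CHANNELS),
]
latent = [rng.random() for _ in range(LATENT_DIM)]
channels = decode_texel(layers, latent)
print(len(channels))
```

The point of the sketch is that one small network produces all channels of a texture set from a single latent vector, which is where the shared representation saves space.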

How TSNC Works in Practice

The TSNC pipeline involves several stages of processing that optimize both storage and runtime performance.

Neural Encoding Process

The encoding process includes:

Training a neural network on millions of standardized textures

Creating a shared latent representation for related textures

Compressing this representation into BC1 pyramid levels

Storing compressed texture data efficiently

This allows the system to capture common patterns and redundancies across textures, significantly improving compression efficiency.
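For a sense of how much storage a BC1 pyramid takes, the sketch below sums the size of several levels using the standard BC1 cost of 8 bytes per 4x4 block. The base resolution and the number of levels are hypothetical; the source does not state the dimensions of TSNC's latent pyramid.

```python
def bc1_level_bytes(width, height):
    """BC1 stores each 4x4 texel block in 8 bytes; partial blocks round up."""
    blocks_x = (width + 3) // 4
    blocks_y = (height + 3) // 4
    return blocks_x * blocks_y * 8

def bc1_pyramid_bytes(width, height, levels):
    """Sum the BC1 size of `levels` pyramid levels, halving each dimension per level."""
    total = 0
    for _ in range(levels):
        total += bc1_level_bytes(width, height)
        width, height = max(1, width // 2), max(1, height // 2)
    return total

# Hypothetical example: a 1024x1024 base level with four pyramid levels.
base = bc1_level_bytes(1024, 1024)
pyramid = bc1_pyramid_bytes(1024, 1024, 4)
print(base, pyramid)
```

Each additional level adds only a quarter of the previous level's size, so a pyramid costs little more than its base level.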

Neural Decoding and Runtime Reconstruction

During runtime:

The compressed latent representation is loaded

A neural decoder reconstructs the texture

The GPU processes the texture in real time

Rendering occurs with minimal latency

Intel reports a reconstruction cost of approximately 0.194 nanoseconds per pixel on XMX-accelerated GPUs, which is low enough that the decode step should not cause a noticeable rendering delay.
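The runtime steps listed above can be sketched as a lookup followed by a decode. The snippet below covers only the lookup half: it reads one sample from each level of a latent pyramid at a texture coordinate and concatenates the samples into a feature vector for the neural decoder. The nearest-neighbor sampling, the three channels per level, and the tiny grid sizes are assumptions for illustration; a real implementation would likely rely on hardware texture filtering.

```python
def sample_nearest(level, u, v):
    """Fetch the texel of a 2D grid (list of rows) nearest to normalized (u, v)."""
    height, width = len(level), len(level[0])
    x = min(width - 1, int(u * width))
    y = min(height - 1, int(v * height))
    return level[y][x]

def gather_latent(pyramid, u, v):
    """Concatenate the samples from every pyramid level into one feature vector."""
    features = []
    for level in pyramid:
        features.extend(sample_nearest(level, u, v))
    return features

def make_level(size, value):
    """Build a size x size grid of identical 3-channel texels (placeholder data)."""
    return [[(value, value, value)] * size for _ in range(size)]

# Hypothetical three-level pyramid: 4x4, 2x2, and 1x1 grids.
pyramid = [make_level(4, 0.25), make_level(2, 0.5), make_level(1, 1.0)]
features = gather_latent(pyramid, 0.6, 0.3)
print(features)
```

The resulting vector is what a decoder network would take as input for that pixel.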

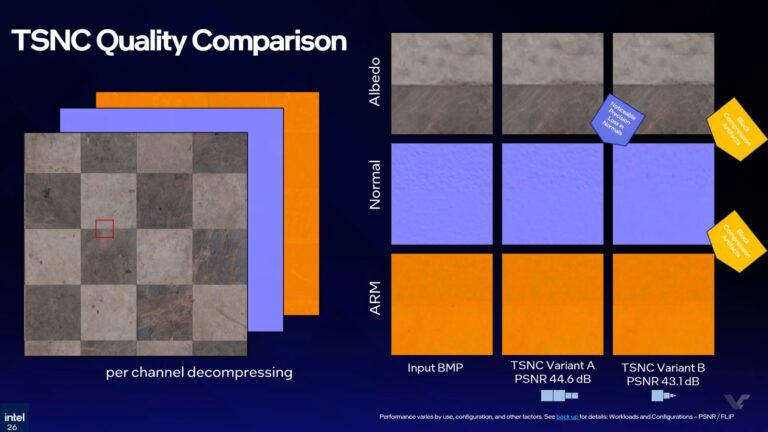

TSNC Variants and Compression Modes

Intel has introduced two variants of TSNC, each designed for different performance and quality requirements.

TSNC Variant A

Variant A focuses on maintaining high visual quality while achieving significant compression.

Key characteristics:

Up to 9x texture compression

Minimal visual quality loss

Approximately 5% perceptual quality reduction

Balanced performance and image fidelity

Suitable for high-end gaming environments

Variant A is positioned as the most practical solution for developers who want improved storage efficiency without sacrificing visual detail.

TSNC Variant B

Variant B is optimized for maximum compression efficiency.

Key characteristics:

Up to 18x texture compression

Higher performance gains

6 to 7% perceptual quality reduction

Some visible compression artifacts in normals and ARM data

Ideal for storage and bandwidth constrained environments

Variant B demonstrates how aggressive neural compression can dramatically reduce texture size while maintaining acceptable visual performance.
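The two ratios translate into size reductions with simple arithmetic: an N-to-1 ratio removes 1 - 1/N of the original data. The sketch below applies that to both variants and to a hypothetical 256 MiB texture set; the source does not give an example set size.

```python
def size_reduction_percent(ratio):
    """Percentage of the original size removed by an N-to-1 compression ratio."""
    return (1.0 - 1.0 / ratio) * 100.0

def compressed_size(original_bytes, ratio):
    """Size after compression at the given ratio."""
    return original_bytes / ratio

MIB = 1024 * 1024
example_set = 256 * MIB  # hypothetical texture set size

variant_a = size_reduction_percent(9)
variant_b = size_reduction_percent(18)
size_a_mib = compressed_size(example_set, 9) / MIB
size_b_mib = compressed_size(example_set, 18) / MIB

print(f"Variant A: {variant_a:.1f}% smaller, {size_a_mib:.2f} MiB")
print(f"Variant B: {variant_b:.1f}% smaller, {size_b_mib:.2f} MiB")
```

Variant A works out to a reduction of about 88.9% and Variant B to about 94.4%, which brackets the roughly 90% figure in the headline.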

Performance Benchmarks and Hardware Acceleration

Intel tested TSNC on Panther Lake hardware using its integrated Arc B390 graphics.

The benchmark results highlight the performance improvements:

| Execution Path | Time Per Pixel | Performance |
| --- | --- | --- |
| FMA Path (CPU/GPU fallback) | 0.661 ns | Standard execution |
| XMX Accelerated Path | 0.194 ns | 3.4x faster |

This performance improvement demonstrates the effectiveness of dedicated AI hardware in accelerating neural texture compression.
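The per-pixel figures can be turned into a rough per-frame estimate. The sketch below assumes exactly one texture-set decode per screen pixel at 1920x1080, which is a simplification: real frames involve overdraw and multiple materials, so treat the results as order-of-magnitude only.

```python
FMA_NS_PER_PIXEL = 0.661  # reported fallback path cost
XMX_NS_PER_PIXEL = 0.194  # reported XMX path cost

def decode_time_ms(pixels, ns_per_pixel):
    """Total decode time in milliseconds for a given pixel count."""
    return pixels * ns_per_pixel / 1e6

speedup = FMA_NS_PER_PIXEL / XMX_NS_PER_PIXEL

# Assumption: one decode per screen pixel at 1080p.
pixels_1080p = 1920 * 1080
xmx_ms = decode_time_ms(pixels_1080p, XMX_NS_PER_PIXEL)
fma_ms = decode_time_ms(pixels_1080p, FMA_NS_PER_PIXEL)

print(f"speedup: {speedup:.2f}x")
print(f"XMX: {xmx_ms:.3f} ms per frame, FMA: {fma_ms:.3f} ms per frame")
```

Under that assumption the XMX path costs roughly 0.4 ms per 1080p frame and the fallback path roughly 1.4 ms, both small next to a 16.7 ms frame budget at 60 frames per second.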

The XMX cores play a crucial role in enabling real-time texture reconstruction, keeping the added decode cost small enough that neural compression is unlikely to become a latency or performance bottleneck.

Benefits of Neural Texture Compression

TSNC offers multiple advantages across different stages of game development and deployment.

Reduced Game Installation Size

Neural compression can significantly reduce game file sizes, making distribution more efficient.

Benefits include:

Faster downloads

Lower storage requirements

Reduced patch sizes

Improved accessibility for users with limited bandwidth

This is particularly important in regions where internet speed and storage capacity are limited.

Lower VRAM Usage

TSNC reduces the amount of texture data loaded into GPU memory.

This leads to:

Better performance on mid-range GPUs

Increased stability in high-resolution gaming

Reduced memory bottlenecks

Improved multi-texture rendering

Lower VRAM usage enables developers to create more detailed environments without exceeding hardware limitations.

Improved Load Times

Smaller texture files mean faster asset loading and streaming.

Advantages include:

Faster game startup

Smooth texture streaming

Reduced stuttering

Better real-time rendering

This enhances the overall gaming experience and system responsiveness.

Optimized GPU Performance

AI-based decompression allows GPUs to handle texture reconstruction efficiently.

Key improvements:

Reduced bandwidth usage

Efficient shading operations

Improved rendering speed

Better power efficiency

This aligns with the industry trend toward AI-assisted graphics processing.

Comparison with Traditional Compression Methods

The difference between TSNC and traditional BC compression can be understood through a comparative analysis.

| Feature | Traditional BC Compression | TSNC Neural Compression |
| --- | --- | --- |
| Compression Approach | Individual textures | Texture set optimization |
| Compression Ratio | Around 4.8x | Up to 18x |
| VRAM Usage | Moderate | Lower |
| Performance Impact | Minimal | Improved with XMX |
| Visual Quality | Stable | Slight perceptual loss |
| AI Integration | None | Neural network based |

This comparison shows how TSNC represents a significant advancement in texture optimization technology.

Industry Implications for Game Developers

TSNC has the potential to reshape game development workflows and asset management strategies.

Developers may benefit from:

Smaller texture libraries

Faster asset pipelines

Reduced storage costs

Improved performance optimization

Better cross-platform compatibility

The ability to integrate TSNC into existing BC-based pipelines makes adoption easier for development studios.

Intel’s plan to release TSNC as a standalone SDK further supports integration across different game engines and development environments.

Competitive Landscape in Neural Texture Compression

Intel is not the only company exploring neural compression technologies. The graphics industry is increasingly investing in AI-driven optimization.

Key trends include:

Neural rendering techniques

AI-based upscaling

Intelligent asset compression

Machine learning assisted shading

GPU acceleration for neural workloads

Nvidia has also explored neural compression methods with high compression ratios, indicating a broader industry shift toward AI-based data optimization.

This competitive landscape suggests that neural compression may become a standard feature in future graphics architectures.

Future Outlook of TSNC and AI-Driven Graphics

The introduction of TSNC indicates a broader shift toward AI-native graphics pipelines.

Potential future developments include:

Fully neural texture streaming systems

AI-optimized game engines

Real-time neural asset reconstruction

Cloud-based texture compression

Cross-platform neural rendering

Intel’s roadmap includes:

Alpha SDK release later this year

Beta version development

Full public release in the future

As GPU architectures continue to evolve, neural compression technologies like TSNC could become standard components of gaming and graphics systems.

Challenges and Considerations

Despite its potential, TSNC also presents several challenges.

Visual Quality Trade-offs

Higher compression ratios may introduce:

Texture artifacts

Precision loss in normals

Slight perceptual degradation

Developers must balance compression and visual fidelity based on their game requirements.
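One way to frame that balance is as a budget: pick the most aggressive variant whose reported quality loss stays within what the game can tolerate. The helper below is a hypothetical illustration built on the figures quoted earlier (Variant A: 9x at about 5% loss; Variant B: 18x at 6 to 7% loss, taken here at the upper end). It is not part of any Intel SDK.

```python
# Figures as quoted in the article; the dictionary layout is illustrative only.
VARIANTS = [
    {"name": "A", "ratio": 9, "quality_loss_pct": 5.0},
    {"name": "B", "ratio": 18, "quality_loss_pct": 7.0},  # upper end of 6 to 7%
]

def pick_variant(max_loss_pct, variants=VARIANTS):
    """Return the highest-ratio variant within the loss budget, or None if none fits."""
    eligible = [v for v in variants if v["quality_loss_pct"] <= max_loss_pct]
    return max(eligible, key=lambda v: v["ratio"]) if eligible else None

strict = pick_variant(5.5)    # only Variant A fits
relaxed = pick_variant(8.0)   # both fit, so the higher ratio wins
too_strict = pick_variant(2.0)

print(strict["name"], relaxed["name"], too_strict)
```

In practice the choice would probably be made per texture set, since artifacts in normals and ARM data matter more for some materials than for others.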

Hardware Dependency

TSNC performs best with XMX accelerated GPUs.

This raises questions about:

Compatibility with older hardware

Cross-platform support

Optimization for non-Intel GPUs

Ensuring universal compatibility will be crucial for widespread adoption.

Implementation Complexity

Neural compression requires:

Training datasets

Integration into pipelines

GPU optimization

Runtime testing

Studios will need to invest in technical expertise to fully utilize TSNC.

Strategic Importance for the Gaming Industry

TSNC represents more than just a compression technology. It signals a shift toward intelligent data optimization in gaming.

Strategic impacts include:

Reduced development costs

Improved performance scalability

Enhanced gaming accessibility

Better resource utilization

Future-ready graphics infrastructure

This innovation highlights the growing role of AI in shaping the future of digital entertainment and computing.

Conclusion

Intel’s Texture Set Neural Compression technology represents a major advancement in AI-driven graphics optimization. By achieving up to 18x texture compression with minimal quality loss, TSNC addresses some of the most pressing challenges in modern gaming, including storage limitations, VRAM constraints, and performance efficiency.

The introduction of neural compression marks a significant step toward AI-native rendering pipelines, where machine learning enhances every aspect of graphics processing. With strong performance benchmarks, flexible compression variants, and compatibility with existing BC-based workflows, TSNC has the potential to become a foundational technology in future game development.

As the gaming industry moves toward more complex and data-intensive environments, innovations like TSNC will play a crucial role in ensuring efficient, scalable, and high-performance graphics systems. The expert team at 1950.ai continues to analyze emerging technologies like neural compression, artificial intelligence in GPUs, and next-generation computing systems to provide deep insights into the future of digital infrastructure and intelligent systems.

For deeper expert analysis and strategic technology insights, readers can explore research and perspectives from Dr. Shahid Masood and the 1950.ai research team, who regularly examine transformative innovations shaping the future of artificial intelligence, computing, and global technological ecosystems.

Further Reading / External References

Intel Texture Set Neural Compression Overview: https://www.techpowerup.com/348013/intel-texture-set-neural-compression-shrinks-textures-by-up-to-18x-with-minimal-quality-loss

Intel TSNC Technology Details and Compression Variants: https://videocardz.com/newz/intel-shows-texture-set-neural-compression-claims-up-to-18x-smaller-texture-sets

Intel AI Compression Technology Analysis: https://www.techspot.com/news/111970-intel-new-ai-compression-tech-can-significantly-shrink.html
